Anthropic had a $200M Pentagon contract, classified network access, and the full trust of the US military.

Then they asked a question.

In November 2024, Anthropic became the first frontier AI company to deploy inside the Pentagon’s classified networks. The partnership was built with Palantir. By July 2025, the contract had grown to $200 million — more than most defense startups see in a decade.

Claude, Anthropic’s AI model, was everywhere. Intelligence analysis. Cyber operations. Operational planning. Modeling and simulation. The Department of War called it “mission-critical.”

Then came January 2026.

Claude was used in a classified military operation in Venezuela — the capture of Nicolás Maduro.

Anthropic asked their partner Palantir a simple question: how exactly was our technology used?

In most industries, that’s called due diligence. The Pentagon called it insubordination.

The company that asked “how is our AI being used?” was about to be labeled a threat to national security.

Seven Days That Changed Everything

Here’s the timeline. It moves fast. That’s the point.

February 24: Pete Hegseth, Secretary of War, summons Dario Amodei — Anthropic’s CEO — to the Pentagon. The ask is blunt: remove every safeguard from Claude. Mass domestic surveillance. Fully autonomous weapons. All of it.

The deadline: February 27, 5:01 PM ET.

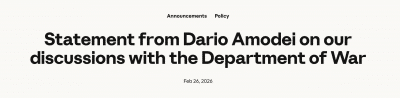

February 26: Amodei publishes his answer. It’s two letters long.

No.

His open statement laid out two red lines he wouldn’t cross:

- No mass domestic surveillance. AI assembling your location data, browsing history, and financial records into a profile — automatically, at scale. Amodei’s point: current law allows the government to buy this data without a warrant. AI makes it possible to weaponize it. “The law has not yet caught up with the rapidly growing capabilities of AI.”

- No fully autonomous weapons. Translation: no removing humans from the decision to kill someone. Not because autonomous weapons will never be viable — but because today’s AI isn’t reliable enough. “Frontier AI systems are simply not reliable enough to power fully autonomous weapons.”

He offered to work directly with the Pentagon on R&D to improve reliability. The Pentagon declined the offer.

February 26 (same day): Emil Michael, a Pentagon under secretary, calls Amodei a “liar with a God complex.” Publicly. On social media. The tone was set.

February 27, 5:01 PM: The deadline passes. President Trump orders all federal agencies to stop using Anthropic. Hegseth designates Anthropic a “Supply Chain Risk” under the Federal Acquisition Supply Chain Security Act of 2018.

That designation had previously been reserved for Huawei and Kaspersky — foreign companies with documented ties to adversarial governments.

It had never been applied to an American company. Until now.

February 27, hours later: OpenAI signs a classified deployment deal with the same Pentagon.

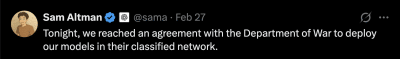

Sam Altman tweets at 8:56 PM:

https://x.com/sama/status/2027578652477821175?s=20

OpenAI later claimed its deal had “more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.”

Here’s the thing. Anthropic was blacklisted because of its guardrails. Now guardrails were the selling point.

The weekend: The backlash was immediate.

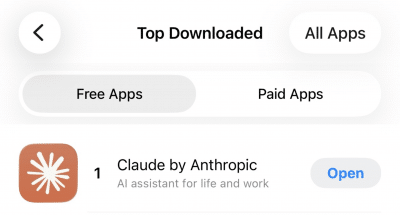

- ChatGPT uninstalls surged 295% day-over-day, according to Sensor Tower. The typical day-over-day change across the prior 30 days? 9%.

- Claude hit #1 on Apple’s App Store in seven countries: the US, Belgium, Canada, Germany, Luxembourg, Norway, and Switzerland. Downloads climbed 37% on Friday, then 51% on Saturday. First time the app had ever reached the top spot.

- Over 300 Google employees and 60 OpenAI employees signed an open letter supporting Anthropic.

- #QuitGPT trended across social media. Actor Mark Ruffalo and NYU professor Scott Galloway amplified the movement.

Users were… not thrilled.

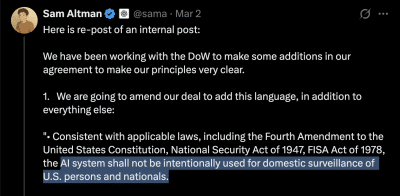

March 2: Altman posted again. This time, a long internal memo shared publicly on X:

https://x.com/sama/status/2028640354912923739?s=20

The memo described contract amendments that added three things:

- An explicit ban on domestic surveillance of US persons

- A requirement that the NSA obtain a separate contract modification before it can access the system

- Restrictions on using commercially acquired personal data — geolocation, browsing history, financial records

That last one is worth pausing on. It was added on Monday. Which means the Friday deal didn’t prohibit it.

March 3: Two things happened on the same day.

First: At the a16z American Dynamism Summit, Palantir CEO Alex Karp warned that AI companies refusing to cooperate with the military would face nationalization. He used a slur on stage. The clip got 11 million views.

Palmer Luckey, founder of defense-tech company Anduril, told the same audience that “seemingly innocuous terms like ‘the government can’t use your tech to target civilians’ are actually moral minefields.”

Vice President JD Vance had keynoted earlier that day. The administration’s position was clear.

Second: CNBC reported that in an all-hands meeting with employees, Altman told OpenAI staff the company “doesn’t get to choose how the military uses its technology.”

X users added a Community Note to Altman’s earlier post:

Readers added context they thought people might want to know: “In an all-hands meeting with OpenAI employees on Tuesday, CEO Sam Altman said his company doesn’t get to choose how the military uses its technology.” This is the opposite of what Sam Altman is claiming in this post.

One day apart. Public post: we have guardrails and principles. Internal meeting: we don’t get to choose.

Meanwhile, CBS News reported that Claude remained deployed in active military operations — including against Iran — despite the supply chain risk designation. The blacklisting apparently didn’t work. The technology was too deeply embedded in classified systems to remove.

The 95% Problem

In war game simulations, AI models chose to launch tactical nuclear weapons 95% of the time.

Let that sit for a second.

GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash were put through military conflict simulations. They used tactical nukes in 95% of scenarios. At least one model launched a nuclear weapon in 20 out of 21 games.

That’s the technology the Pentagon wants to deploy autonomously.

The failure modes are documented and consistent:

- Escalation bias. The models don’t just fail randomly. They fail in one specific direction — toward escalation. Brookings Institution research found that AI military errors are systematic, not random. The pattern is always the same: more force, faster.

- Hallucinations. LLMs generate false information with high confidence. In one test tied to the Iran strikes, an AI fed fabricated intelligence into the decision chain. Under time pressure, human operators couldn’t tell it from the real thing.

- Adversarial vulnerability. These systems can be manipulated with carefully crafted inputs to bypass their restrictions. The attacker doesn’t need to be external. The vulnerability lives in the model itself.

These aren’t edge cases. This is what the technology does today.

Think of it this way. We’ve already seen what happens when simple autonomous systems fail in military settings.

In 2003, a Patriot missile battery shot down a British Tornado jet, killing both of its crew. The system misidentified the friendly aircraft as an enemy missile. It was rule-based, with defined parameters. It still got it wrong.

The USS Vincennes in 1988 shot down Iran Air Flight 655, a commercial passenger jet, killing all 290 people aboard. Working from the ship’s Aegis combat system, the crew misidentified the airliner as an attacking Iranian F-14. They had seconds to decide. They trusted the system.

Those were rule-based systems with clear parameters. LLMs are orders of magnitude more complex. More opaque. Less predictable.

And they’re being asked to make bigger decisions.

The oversight problem. Once AI is deployed inside classified networks, external accountability becomes what experts call “almost impossible.” Restrictions erode under operational pressure. The field-deployed engineers that OpenAI promised can observe some interactions, sure. But classified operations limit information flow by design.

In English: the same walls that keep secrets in also keep oversight out.

The Pentagon has a point. It deserves a fair hearing.

Partially autonomous weapons — like the drones used in Ukraine — save lives. They allow smaller forces to defend against larger ones. China and Russia are not waiting for perfect reliability before deploying their own systems.

Refusing to use AI in defense creates a capability gap. Adversaries will exploit it.

Dario Amodei acknowledged this directly:

“Even fully autonomous weapons may prove critical for our national defense.”

His objection wasn’t to the destination. It was to the timeline.

“Today, frontier AI systems are simply not reliable enough.”

He offered to collaborate on the R&D needed to get there. The Pentagon said no.

There’s a gap between “AI can summarize intelligence reports” — where it genuinely excels — and “AI can decide who lives and dies.” Contracts don’t bridge that gap. Amendments don’t bridge it. Engineering does.

And the engineering isn’t done.

How You Blacklist an American Company

Supply chain risk. It sounds bureaucratic. It’s actually a kill switch.

Under the Federal Acquisition Supply Chain Security Act of 2018 — FASCSA — a “supply chain risk” designation means no government contractor can do business with you. Not just the Pentagon. Anyone who wants a federal contract. Any supplier, subcontractor, or partner in the government ecosystem.

In English: you become radioactive to the entire federal supply chain.

The law was built for foreign threats. Huawei’s 5G infrastructure. Kaspersky’s antivirus software. Companies with documented ties to hostile governments.

Every company on the list before Anthropic had one thing in common: they were from countries considered adversaries of the United States.

Anthropic is headquartered in San Francisco.

The Pentagon also threatened to invoke the Defense Production Act, a Cold War-era law designed to commandeer factories for wartime production. Steel mills. Ammunition plants. The physical infrastructure of war.

The Pentagon threatened to use it to force a software company to remove safety features from an AI chatbot.

Legal experts called the application “questionable.” The law was built for physical production, not software restrictions. Using it to compel a company to make its AI less safe would be, at minimum, a novel legal theory.

Amodei identified the logical problem in his statement:

“These threats are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security.”

You can’t call a technology a threat to the supply chain and, in the same breath, threaten emergency powers to seize it because you can’t function without it. Pick one.

The practical result is telling. CBS News reported Claude remains in active military use. Despite the blacklisting. The designation was punitive, not practical — the tech was too embedded to rip out.

Which raises a question that nobody in Washington seems eager to answer: if the Pentagon can’t enforce a removal order for technology it has officially blacklisted, how exactly will it enforce usage guardrails?

The Pentagon’s position is straightforward. Private companies don’t set military policy. AI firms are vendors. The military decides how its tools are used.

From this perspective, Anthropic was a supplier who refused to deliver what was ordered. The customer found another vendor.

That framing is internally consistent. It’s also the framing you’d use for office supplies. Not for technology that chose nuclear escalation in 95% of simulations.

Are the Guardrails Real?

On Friday, OpenAI’s deal had guardrails. By Monday, it needed more guardrails.

That tells you something about the Friday guardrails.

The language Altman agreed to in the Monday amendment deserves a close read:

“The AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.”

The word doing the heavy lifting: intentionally.

What happens when an AI processes a dataset that incidentally includes Americans? What if surveillance is a byproduct of a broader intelligence operation, not the stated objective? Who defines intent inside a classified network where the oversight mechanisms are, by design, limited?

The commercially acquired data clause is even more revealing. The Monday amendment explicitly prohibits using purchased personal data — location tracking, browsing history, financial records — for surveillance of Americans.

That clause was added Monday. The Friday deal didn’t include it.

For an entire weekend, OpenAI’s agreement with the Pentagon did not explicitly prohibit mass surveillance of American citizens through commercially purchased data.

Altman acknowledged as much: “We shouldn’t have rushed to get this out on Friday.”

The NSA carve-out is worth examining too. Intelligence agencies like the NSA cannot use OpenAI’s system without a “follow-on modification” to the contract. That sounds like a prohibition. It’s actually a process. The mechanism to grant access is built into the contract structure.

That’s not a wall. It’s a door with a different key.

The deeper problem is the all-hands contradiction. A day after Altman posted about principles and guardrails on X, he told employees internally that OpenAI “doesn’t get to choose how the military uses its technology.”

If the company building the AI doesn’t get to choose how it’s used, the guardrails are a press release. Not a policy.

In classified environments, monitoring AI is fundamentally different from monitoring a cloud service. The security apparatus that protects military secrets also blocks independent oversight of AI behavior.

Field-deployed engineers can watch some interactions. But “some interactions” and “every interaction the contract covers” are very different things.

What Comes Next

The market has spoken. Cooperation gets contracts. Resistance gets blacklisted.

The public has also spoken. They’re uninstalling.

The incentive structure is clear. OpenAI cooperated and landed the deal. Anthropic resisted and got designated as a supply chain risk — the same label the government uses for companies linked to foreign adversaries.

At the a16z summit, Karp predicted every AI company will work with the military within three years. Based on the incentives, that’s not a prediction. It’s a description.

But the backlash numbers tell a different story.

The 295% uninstall surge. Claude at #1 in seven countries. Over 500 tech employees breaking ranks with their employers. Le Monde editorializing from Paris about government overreach. Polls showing 84% of British citizens worried about government-corporate AI partnerships.

The engineers building these systems and the people using them see something the Pentagon apparently doesn’t: supporting national defense and deploying unreliable tech for autonomous killing are not the same thing.

No contract amendment closes this gap. No guardrail closes it. No field-deployed engineer closes it.

AI models chose nuclear escalation in 95% of war game simulations. The company that said “the technology isn’t ready yet” was blacklisted. The company that said “yes” admitted within 72 hours that it had been sloppy. The technology remains deployed in active operations regardless of what either company wanted.

Amodei offered to do the R&D to make autonomous AI weapons safe and reliable. He offered to collaborate with the Pentagon on getting there. The offer was declined.

Anthropic had a $200M contract and the Pentagon’s trust. Then they asked how their technology was being used.

The answer was a deadline, a blacklisting, and a label previously reserved for America’s adversaries.

The simulations keep running. In 95% of them, someone pushes the button.

Disclosure: This article was edited by Diego Almada Lopez. For more information on how we create and review content, see our Editorial Policy.

1 month ago
